Material’s April updates protect the back door, proactively harden the cloud workspace, and simplify SecOps.

Material had a big April, and this month's updates span the full attack surface: proactive VIP recommendations, a rebuilt Integrations experience, and a new default-on detection that closes a frequently exploited gap in Google Calendar security. We're also announcing our new OAuth Remediation Agent, which autonomously manages third-party app risk.

Security tools tend to reward the people who already know what to look for. The analysts who know the right query, the admins who remember to update the VIP list, the teams who got around to finishing the integration setup.

We think that's backwards. The best version of Material is one that does more of the work before you have to ask — that surfaces the right risk before it becomes an incident, that makes configuration feel like an answer instead of a question. This month's updates are built around that idea.

Introducing the OAuth Remediation Agent

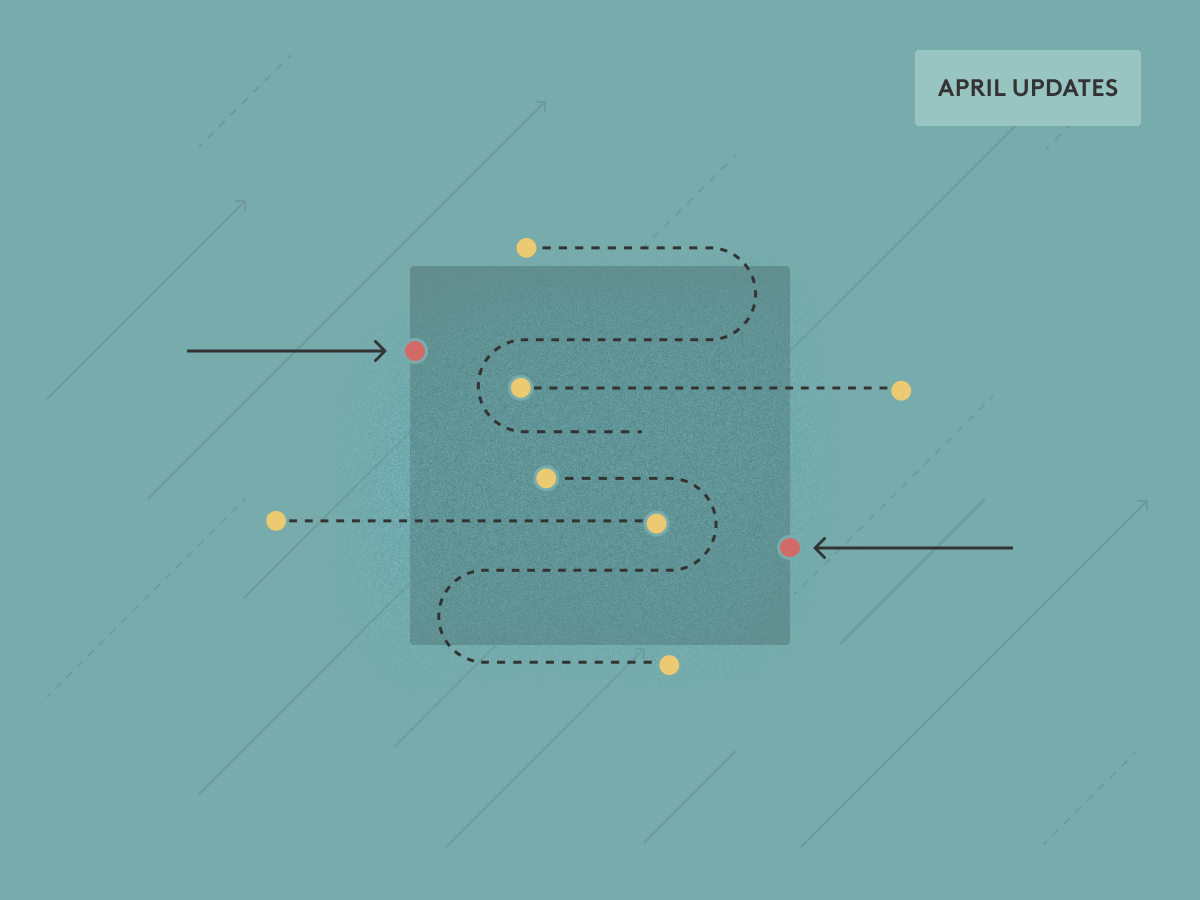

Security teams have spent years hardening the front door. The back door (third-party OAuth connections) has largely been managed with spreadsheets, periodic manual reviews, and good intentions that don't scale.

The problem is structural. Every new SaaS tool and AI agent your users adopt requires a handshake with your corporate data. Most organizations have hundreds of these connections, with limited visibility into what those apps are actually doing, who granted them, and whether they still need to exist. A permission list tells you what an app *could* do. It says nothing about what it *is* doing.

Material's new OAuth Remediation Agent moves OAuth management from periodic review to continuous action. The agent does three things: it identifies every new app connection the moment a user clicks Allow, assesses contextual risk based on actual API behavior and the blast radius of the user who authorized it, and automatically revokes tokens for dormant or risky apps. For ambiguous cases, it routes verification directly through Slack — keeping a human in the loop without turning your team into an app-review assembly line.
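To make the revoke / ask / keep split concrete, here is a minimal triage sketch. It is an illustrative policy, not Material's actual risk model: the `OAuthGrant` fields, the 90-day dormancy limit, the "sensitive scopes" set, and the `user_is_privileged` flag (a stand-in for blast radius) are all assumptions chosen for the example. The two scope strings are real Google OAuth scopes for full Gmail and full Drive access.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum


class Action(Enum):
    KEEP = "keep"
    REVOKE = "revoke"
    ASK_USER = "ask_user"  # e.g. a verification prompt sent over Slack


@dataclass
class OAuthGrant:
    app_name: str
    granted_by: str
    scopes: set[str]
    granted_at: datetime
    last_api_call: datetime | None  # most recent observed API activity, if any
    user_is_privileged: bool  # hypothetical stand-in for the user's blast radius


# Hypothetical policy knobs, chosen for illustration only.
DORMANCY_LIMIT = timedelta(days=90)
SENSITIVE_SCOPES = {
    "https://mail.google.com/",               # full Gmail access
    "https://www.googleapis.com/auth/drive",  # full Google Drive access
}


def triage(grant: OAuthGrant, now: datetime) -> Action:
    """Return an action for one grant under this illustrative policy."""
    # A brand-new grant counts as active from the moment it was authorized,
    # so a fresh connection is never revoked just for having no traffic yet.
    last_seen = grant.last_api_call or grant.granted_at
    if now - last_seen > DORMANCY_LIMIT:
        return Action.REVOKE  # dormant: unused access is all risk, no benefit
    broad = bool(grant.scopes & SENSITIVE_SCOPES)
    if broad and grant.user_is_privileged:
        return Action.REVOKE  # wide access on a high-blast-radius account
    if broad or grant.user_is_privileged:
        return Action.ASK_USER  # ambiguous: keep a human in the loop
    return Action.KEEP
```

Dormancy is checked first because it needs no judgment call: an app that hasn't touched the API in months can lose its token whatever its scopes are. Only the mixed cases fall through to a human.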

This was already an emerging threat vector, and AI is making it worse. AI agents are acquiring OAuth access to production environments at an unprecedented rate, their behavior is less predictable than that of static SaaS apps, and most organizations have no scalable way to vet or monitor them. The OAuth Remediation Agent is built for exactly this environment, one where the attack surface grows every time someone installs a productivity extension.

See the full story on how it works and what it covers.

A rebuilt integrations experience

The Integrations page has been rebuilt from the ground up. The new design puts the destination front and center: you pick what you're connecting to — messaging platforms, ticketing systems, identity providers, SIEM/SOAR platforms — then choose which events to route there. Native integrations now ship with recommended default event configurations, so you can be up and running without needing to become an expert in Material's event taxonomy before you've sent your first alert. And if your tool isn't on the native list, custom integrations are available — if it can receive a webhook, it can receive a Material event.
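For the custom-integration path, the receiving side can be very small. The sketch below uses only Python's standard library. The `event_type` field and the event names in `HANDLED_EVENT_TYPES` are hypothetical placeholders, since Material's actual payload schema isn't described here. Parsing lives in a plain function so it can be tested without a running server.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical event names: replace with the types you route to this endpoint.
HANDLED_EVENT_TYPES = {"detection.created", "remediation.completed"}


def handle_event(body: bytes) -> tuple[int, str]:
    """Parse a JSON webhook body and return (HTTP status, message)."""
    try:
        event = json.loads(body)
    except ValueError:  # covers malformed JSON and invalid UTF-8
        return 400, "invalid JSON"
    if not isinstance(event, dict):
        return 400, "expected a JSON object"
    event_type = event.get("event_type")  # assumed field name
    if event_type not in HANDLED_EVENT_TYPES:
        return 202, f"ignored {event_type}"
    # Hand the event off to your own tooling here (ticket, page, log, ...).
    return 200, f"processed {event_type}"


class WebhookHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        status, message = handle_event(self.rfile.read(length))
        payload = message.encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


def serve(port: int = 8080) -> None:
    """Run the receiver until interrupted (blocking)."""
    HTTPServer(("0.0.0.0", port), WebhookHandler).serve_forever()
```

Calling `serve(8080)` starts the listener. A production receiver would also need TLS and some form of request authentication. How Material authenticates its webhook deliveries isn't covered here.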

Know your VIPs before attackers do

VIP impersonation is one of the highest-yield tactics in the attacker playbook. An email that appears to come from your CFO or General Counsel works because it borrows the authority of a senior leader, which makes it an easy social engineering vector. But these messages are much easier to detect if you know who your most impersonated users are.

Material now surfaces VIP recommendations automatically. Over a rolling 30-day window, Material scores accounts on four signals that correlate with targeting risk: volume of messages from first-time sender addresses, inbound messages from freemail providers like Gmail and Yahoo, detections with sensitive tags, and total suspicious detections. Those signals are weighted into a composite percentile score calculated relative to the rest of your organization — so you're seeing who's unusually exposed, not just who's busy.
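Here is one way a "composite percentile score relative to the rest of your organization" could work, under stated assumptions. Each signal is converted to a percentile rank within the org, and the ranks are combined with a weighted average. The equal weights, the 0.8 threshold, the mid-rank tie handling, and all field names are hypothetical. Material hasn't published its weighting, and this is not its implementation.

```python
from __future__ import annotations

from bisect import bisect_left, bisect_right
from dataclasses import dataclass


@dataclass
class AccountSignals:
    """30-day signal counts for one account (field names are hypothetical)."""
    email: str
    first_time_sender_msgs: int
    freemail_inbound_msgs: int
    sensitive_tag_detections: int
    suspicious_detections: int


SIGNALS = (
    "first_time_sender_msgs",
    "freemail_inbound_msgs",
    "sensitive_tag_detections",
    "suspicious_detections",
)
# Assumed equal weights (they sum to 1, so the composite stays between 0 and 1).
WEIGHTS = {name: 0.25 for name in SIGNALS}


def percentile_rank(sorted_values: list[int], x: int) -> float:
    """Mid-rank percentile of x within the org: ties share the middle of their range."""
    below = bisect_left(sorted_values, x)
    at_or_below = bisect_right(sorted_values, x)
    return (below + at_or_below) / 2 / len(sorted_values)


def composite_scores(accounts: list[AccountSignals]) -> dict[str, float]:
    """Weighted average of each account's per-signal percentile ranks."""
    org = {s: sorted(getattr(a, s) for a in accounts) for s in SIGNALS}
    return {
        a.email: sum(WEIGHTS[s] * percentile_rank(org[s], getattr(a, s)) for s in SIGNALS)
        for a in accounts
    }


def recommend_vips(accounts: list[AccountSignals], threshold: float = 0.8) -> list[str]:
    """Suggest accounts at or above the threshold, highest first. Nothing is auto-added."""
    scores = composite_scores(accounts)
    picks = [email for email, score in scores.items() if score >= threshold]
    return sorted(picks, key=lambda email: scores[email], reverse=True)
```

Ranking against the org, rather than using raw counts, is what separates "unusually exposed" from "just busy". The mid-rank choice means a signal on which nobody stands out (say, everyone at zero) gives every account the same middling score instead of a perfect one.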

When Material identifies likely VIP candidates, a banner appears in the Accounts tab for admins with the right permissions. A single review modal shows the suggested accounts and lets you select the ones you want, adding them to your VIP impersonation list in one click. If the suggestions don't reflect your environment, you can dismiss them instead. Nothing is added automatically. This is a recommendation, not an override.

The result: a VIP list that reflects who attackers are actually targeting, updated continuously rather than set once and left to go stale.

Closing the calendar gap

We've written before about calendar-based phishing — attackers sending malicious invites that land on calendars in ways that bypass inbox defenses. The February release added automated calendar remediation to clean up after a phishing event. This month, we're addressing a root-cause configuration that makes organizations significantly more vulnerable in the first place.

When a Google account is configured to automatically accept meeting invitations from anyone, attackers don't need the user to click Accept. The invite just appears. It's a setting users can enable themselves, even when organizational policy says otherwise — and it's a known vector for BEC and social engineering: get the meeting on the calendar, and the malicious content comes with it.

Material now ships a default-on detection that identifies Google accounts using this configuration. When it fires, you get clear visibility into which accounts are affected, along with remediation guidance that points users and admins to the right Google Calendar controls to fix it. The detection applies to active Google accounts across Essentials and Advanced.
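At its core, a detection like this reduces to a filter over account settings. The sketch below is hypothetical throughout. `InviteHandling` is an invented encoding of a user's automatic-invitation preference, and the step of reading that preference from Google is assumed to have already happened. The point is the condition: the account is active *and* invitations from anyone land on the calendar.

```python
from dataclasses import dataclass
from enum import Enum


class InviteHandling(Enum):
    """Hypothetical encoding of a user's automatic-invitation setting."""
    FROM_EVERYONE = "from_everyone"            # invites land on the calendar unprompted
    KNOWN_SENDERS_ONLY = "known_senders_only"
    ON_RESPONSE = "on_response"                # added only after the user responds


@dataclass
class GoogleAccount:
    email: str
    active: bool
    invite_handling: InviteHandling


def detect_auto_accept(accounts: list[GoogleAccount]) -> list[str]:
    """Flag active accounts that accept calendar invitations from anyone."""
    return [
        a.email
        for a in accounts
        if a.active and a.invite_handling is InviteHandling.FROM_EVERYONE
    ]
```

Suspended or deprovisioned accounts are skipped, matching the scope of "active Google accounts" above.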

These updates are all pointed in the same direction: making Material work harder so you don't have to. Proactively flagging who's at risk, making the integration story simpler, and closing gaps before they become incidents. Reach out for a demo to see them live.